AI made it cheap to send a thousand "personalized" emails an hour. That is exactly why personalization stopped working. The teams winning B2B outbound in 2026 send up to 80% fewer emails, and they use AI for the work humans were never doing well in the first place.

On a Tuesday morning in 2026, a director of revenue operations at a mid-market SaaS company opens her inbox. Six emails compete for her attention before her first coffee. Five of them open with some variant of "I loved your point about pipeline coverage on the Modern Sales podcast last month." All five reference the same five-second clip. All five are obviously written by the same model with slightly different prompts. She does not reply to any of them. She stops opening them after the second: by then she has recognized the pattern, and the rest read as noise from the preview line alone.

This is the Templated Tell: the structural giveaway in AI-generated outbound that buyers now recognize within a sentence. The opener cites a piece of public information. The second sentence pivots awkwardly to a value prop. The signature contains a calendar link with a UTM parameter. Every email in the genre hits the same beats in the same order, because every team is using a slight variation of the same prompt against a slight variation of the same model. The "personalization" is real in the technical sense. It is also irrelevant in the buyer's sense, because it is no longer a costly signal.

For two decades, a hand-written reference was a costly signal. It said: a human spent fifteen minutes on you specifically. That signal is what made personalization work. AI did not break personalization by writing bad copy. AI broke personalization by removing the cost of the signal.

The economics of a costly signal, briefly

In every market that runs on attention, the things that work are the things that are expensive to fake. A reference letter from a respected operator works because it is hard to get. A handwritten note works because it cannot be sent in bulk. A specific, weird, accurate observation about a buyer's public earnings call works because it requires real attention. The moment any of these become cheap to fake — letterhead generators, mail-merge handwriting, AI-summarized earnings calls — they stop working. Not gradually. Sharply.

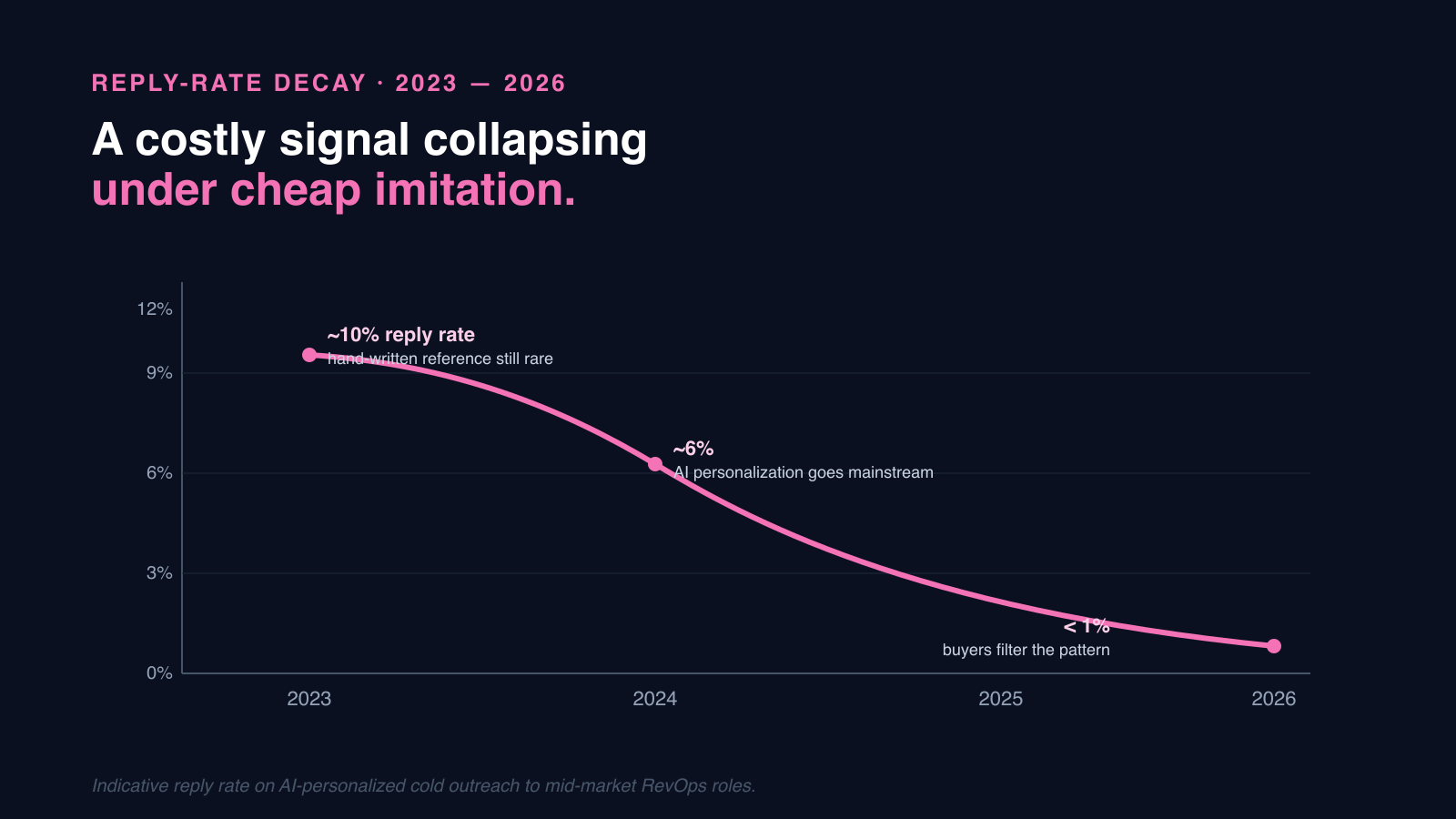

Outbound went through this transition in roughly eighteen months. In 2023, an AI-generated reference to a podcast appearance booked meetings. In 2024, it underperformed human-written outreach. In 2025, buyers were filtering it as spam. The reply-rate decay curve was not linear. It looked exactly like a costly signal collapsing under the weight of cheap imitation. The fix is not better prompts. The fix is finding the next costly signal.

A costly signal collapsing under cheap imitation.

The Subtraction Strategy

The teams that beat their pipeline target consistently in 2026 share one structural choice: they send dramatically less outbound, to dramatically better-fit accounts, with dramatically more attention per send. We call this the Subtraction Strategy because it is built around what the team chooses not to do.

The instinct in 2024 was that AI would let SDRs send 1,500 emails a day instead of 80. The math seemed obvious: same reply rate, roughly twenty times the volume, roughly twenty times the meetings. In practice, the reply rate did not stay constant. Each additional badly targeted email trained buyers to filter harder, hurt the sending domain's reputation, and eroded the team's ability to land the few genuinely well-targeted emails it was sending. Volume did not lift meeting count. It collapsed it.

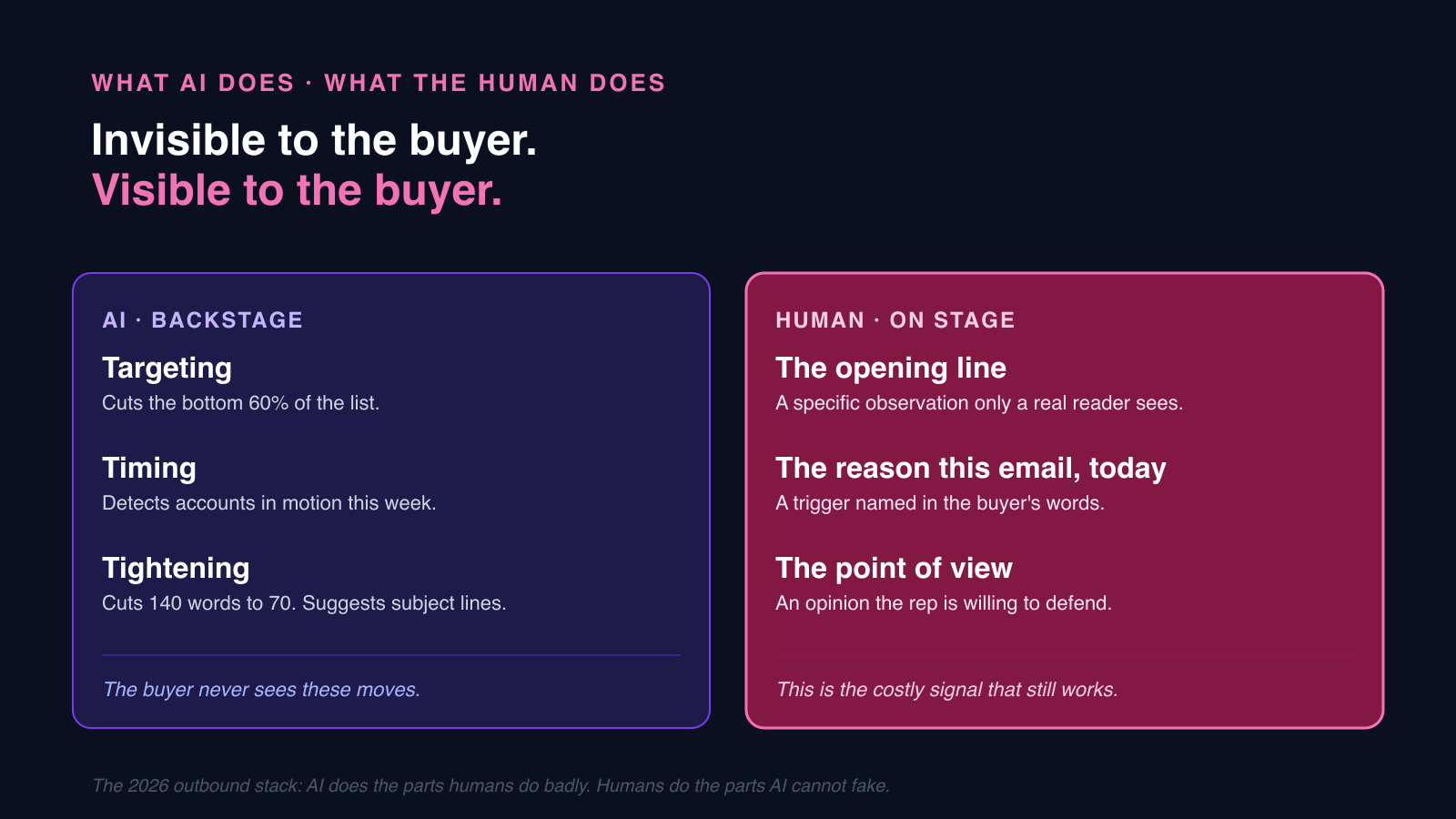

Subtraction reverses this. Cut the list by 80%. Spend ten times as much real research time on each account that remains. Use AI for the parts of the workflow buyers cannot see: the targeting upstream, the timing detection, the structural rewrite at the end. Leave the parts buyers can see (the opening line, the specific observation, the reason this email is being sent today) to the human who actually read the account.

Where AI actually earns its keep in outbound

AI is uniquely good at three things in outbound, and all three are invisible to the recipient. Most teams use it for the one thing it is bad at — the visible part — and then complain that personalization is dead.

AI does the parts humans do badly. Humans do the parts AI cannot fake.

Targeting: deciding who not to email

In most outbound lists, 60–70% of the accounts were never going to close, regardless of message quality: wrong segment, wrong company stage, wrong tech stack, wrong geography, or no one in the role that owns the problem. AI is unreasonably good at predicting this from firmographics, technographics, hiring signals, funding patterns, and the historical shape of your closed-won data. Cutting the bottom 60% of the list is the single largest lever in outbound, and it is the one humans hate to pull because it feels like leaving meetings on the table. It is, in fact, leaving distractions on the table.
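
To make the idea concrete, here is a minimal sketch, assuming each account is described by a few categorical attributes (the field names `segment`, `stage`, and `geo` are hypothetical) and that you have past opportunities labeled won or lost. It scores each account by how often similar accounts closed historically, then drops the bottom 60%. This is a simple frequency-based stand-in, not a trained model and not how any particular product scores accounts.

```python
from collections import defaultdict

# Hypothetical attributes; a real model would use many more signals
# (technographics, hiring, funding) and a proper classifier.
ATTRIBUTES = ("segment", "stage", "geo")


def win_rates(history):
    """Historical close rate per (attribute, value) pair.

    `history` is a list of dicts holding the ATTRIBUTES plus a boolean `won`.
    """
    totals = defaultdict(int)
    wins = defaultdict(int)
    for opp in history:
        for attr in ATTRIBUTES:
            key = (attr, opp[attr])
            totals[key] += 1
            wins[key] += int(opp["won"])
    return {key: wins[key] / totals[key] for key in totals}


def fit_score(account, rates):
    """Average historical close rate across the account's attribute values.

    Values never seen in history count as 0.0 (no evidence of fit).
    """
    return sum(rates.get((a, account[a]), 0.0) for a in ATTRIBUTES) / len(ATTRIBUTES)


def cut_list(accounts, history, keep_fraction=0.4):
    """Rank accounts by fit score and keep only the top `keep_fraction`."""
    rates = win_rates(history)
    ranked = sorted(accounts, key=lambda acc: fit_score(acc, rates), reverse=True)
    keep = max(1, round(len(ranked) * keep_fraction))
    return ranked[:keep]
```

The `keep_fraction=0.4` default mirrors the "cut the bottom 60%" rule of thumb; in practice you would check the cutoff against how many accounts your SDRs can actually research.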

Timing: deciding when an account is in motion

A new VP of RevOps starts their job. A competitor loses a marquee customer publicly. A CFO mentions efficiency four times on an earnings call. A target account opens three roles in adjacent functions in the same week. These are the moments outbound actually works, because the account is in a state change and the buyer is briefly receptive. AI can monitor thousands of accounts for these triggers continuously and surface the eight most active accounts in a rep's queue on a Monday morning, replacing the 800-account list the rep used to triage by gut. The rep's job is no longer prospecting. It is responding to motion.
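
A trigger queue like this can be sketched in a few lines. The snippet below is a minimal illustration, assuming trigger events have already been detected upstream and arrive as `(account, trigger_type, event_date)` records; the trigger names and weights are hypothetical placeholders, not recommended values. It keeps only events from the past week, sums weights per account, and returns the top eight accounts for the Monday queue.

```python
from collections import defaultdict
from datetime import date, timedelta

# Hypothetical trigger weights; tune against your own conversion data.
TRIGGER_WEIGHTS = {
    "new_exec": 3.0,          # e.g. a new VP of RevOps starts
    "competitor_loss": 2.5,   # a competitor publicly loses a customer
    "earnings_mention": 2.0,  # pain words repeated on an earnings call
    "hiring_cluster": 1.5,    # several adjacent roles opened in one week
}


def monday_queue(events, today, size=8, window_days=7):
    """Return up to `size` accounts ranked by the weight of recent triggers.

    `events` is an iterable of (account, trigger_type, event_date) tuples.
    Events older than `window_days` or with unknown trigger types are ignored.
    """
    cutoff = today - timedelta(days=window_days)
    scores = defaultdict(float)
    for account, trigger, when in events:
        if when >= cutoff and trigger in TRIGGER_WEIGHTS:
            scores[account] += TRIGGER_WEIGHTS[trigger]
    ranked = sorted(scores, key=lambda acc: scores[acc], reverse=True)
    return ranked[:size]
```

Summing weights means an account with several overlapping triggers rises to the top, which matches the idea that a cluster of changes signals an account in motion.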

Drafting: the second sentence, not the first

The opening line of an outbound email is now the most expensive sentence in B2B sales, because it has to do something AI cannot. It has to communicate, in fewer than fifteen words, that a specific human spent specific attention on this specific account. AI is poor at this, and its attempts land worse as buyers learn the patterns. AI is, however, excellent at the structural work that comes after: rewriting the rep's rough sentence into tight prose, varying sentence length, cutting a 140-word email to 70, generating three subject-line variants, catching the moment the rep slipped into category jargon. That is where reply rates move, and it is work the rep does not have time to do well alone.

Note: The swap-the-name test

If your AI-generated email could be sent to a different account by changing only the company name, it is not personalized. It is templated, and the buyer can tell within a sentence. Apply this test to every email shipping from your stack this week.
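
Part of this audit can be automated. A minimal sketch, assuming you can export sent emails as `(company, body)` pairs: mask each recipient's company name with a placeholder, then flag any two emails that become near-identical, since either could have gone to the other account by changing only the name. The 0.9 similarity threshold is an arbitrary assumption, and passing this check does not prove an email is personalized; it only catches the most obvious templating.

```python
from difflib import SequenceMatcher
from itertools import combinations


def normalize(body: str, company: str) -> str:
    """Collapse whitespace, lowercase, and mask the recipient's company name."""
    text = " ".join(body.split()).lower()
    return text.replace(company.lower(), "{company}")


def swap_the_name_failures(emails, threshold=0.9):
    """Flag pairs of emails that are near-identical once names are masked.

    `emails` is a list of (company, body) tuples. Any pair whose masked
    bodies have a similarity ratio >= `threshold` fails the test.
    """
    masked = [(company, normalize(body, company)) for company, body in emails]
    failures = []
    for (c1, t1), (c2, t2) in combinations(masked, 2):
        if SequenceMatcher(None, t1, t2).ratio() >= threshold:
            failures.append((c1, c2))
    return failures


sent = [
    ("Acme", "Hi team at Acme, I loved your point about pipeline coverage. Worth a call?"),
    ("Globex", "Hi team at Globex, I loved your point about pipeline coverage. Worth a call?"),
    ("Initech", "Saw Initech opened three RevOps roles this week while moving off its old CRM. "
                "Here is how two similar teams handled the migration."),
]
print(swap_the_name_failures(sent))  # [('Acme', 'Globex')]
```

Comparing every pair is quadratic, which is fine for a weekly sample of a few hundred emails but not for a full send log.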

How RevOps reorganizes outbound around subtraction

The structural change that makes the Subtraction Strategy work is a change in how SDR work gets measured. As long as SDRs are paid on send volume or activity, AI will be used to inflate volume. The moment they are paid on meeting quality and downstream pipeline conversion, the math flips and AI gets used for filtering and timing, because that is what moves the metric.

- Replace volume quotas with meeting-quality quotas. Track reply-to-meeting and meeting-to-pipeline, not send-to-reply. The send number stops being a goal and becomes a constraint.

- Build a tight ideal customer profile (ICP) scoring model from closed-won data and refresh it quarterly. The output is not a score; it is a daily list of 30 accounts per SDR.

- Run trigger monitoring as a service to the SDR team, watching for hiring, funding, leadership changes, public mentions of pain words, and tech-stack moves. The trigger queue replaces the prospecting queue.

- Audit a sample of sent emails weekly and apply the swap-the-name test. If a meaningful share fails, the team is back to templated outbound, and the metrics will show it within two weeks.

- Move the SDR comp plan toward the quality of sourced pipeline instead of meetings booked. Bad meetings cost the AE more than they cost the SDR; the comp plan should reflect that.
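
The conversion rates named in the first bullet are simple ratios, but writing them down keeps the team honest about which one is the goal. A small sketch, assuming you can pull weekly counts of sends, replies, meetings, and meetings that became pipeline (the field names are hypothetical). Send-to-reply is still computed, but only for context.

```python
from dataclasses import dataclass


@dataclass
class FunnelCounts:
    """Weekly counts for one SDR or team (hypothetical field names)."""
    sends: int
    replies: int
    meetings: int
    pipeline_opps: int  # meetings that converted to qualified pipeline


def rate(numerator: int, denominator: int) -> float:
    """Ratio that returns 0.0 when there is nothing to convert."""
    return numerator / denominator if denominator else 0.0


def funnel_report(c: FunnelCounts) -> dict:
    return {
        # Context only: sends are a constraint, not a goal.
        "send_to_reply": rate(c.replies, c.sends),
        # The rates the team is actually measured on.
        "reply_to_meeting": rate(c.meetings, c.replies),
        "meeting_to_pipeline": rate(c.pipeline_opps, c.meetings),
    }
```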

What changes after one quarter

Teams that make this shift see send volume drop by 60–80% and meeting count stay flat or grow. The reply rate per send rises sharply, but the more important effect is downstream: the meetings that get booked are with better-fit accounts, which then convert through the pipeline at materially higher rates than legacy outbound. The economics of the SDR seat change in two ways at once. Fewer seats are needed for the same pipeline number, and the seats that remain are higher-leverage — closer to a researcher with judgment than a quota-carrying volume engine.

There is also a reputational effect that is harder to quantify and arguably more durable. Sending domains stop tripping spam filters. Buyers stop reflexively filtering anything that smells like outbound from the team's domain. The fewer emails the team does send are read on their merits instead of being filtered before they are opened. This is not a tactic. It is a rebuilding of trust at the level of the channel itself, and it compounds quietly over quarters.

The deeper bet

The lesson here inverts how most teams thought about AI in 2024. The point of AI in outbound is not to do more of what humans were doing; that path leads to faster spam and lower trust. The point is to do the parts humans were not doing well at all: targeting at scale, timing detection across thousands of accounts, structural rewriting under deadline pressure. Leave the personalization itself to a human who actually read the account, because that is now the costly signal buyers reward.

Signals stop working when they become cheap. Costly signals work because they are expensive to fake. AI changes which signals are which. The team that adapts first wins the inbox; everyone else is competing on a curve that flattens every quarter.

Send fewer outbound emails. Book more meetings.

Brixi combines account scoring, trigger monitoring, and conversation intelligence so SDR teams spend their time on the 30 accounts that will actually answer this week.
