Every B2B team logs loss reasons. Almost none coach from them. The gap between when a deal is actually lost and when the rep hears anything useful about it is the single biggest source of repeat losses in B2B sales, and AI quietly closes it.

It is 4:47pm on a Friday. A rep updates an opportunity to closed-lost, picks "Budget" from a dropdown, types "moving forward Q3" into the notes field, and closes the tab. The deal was actually lost six weeks ago, on a 22-minute call where the buyer asked a sharp question about implementation cost and the rep gave a soft answer. The rep does not remember that moment. The manager never heard the call. The "Budget" loss will sit in a quarterly report next to forty others, and the team will lose six more deals to the same unanswered question before anyone notices.

This delay between the moment a deal is actually lost and the moment the team learns anything actionable from it is what we call the Coaching Lag. It is the silent killer of B2B win rates. Most loss data exists. Most of it is even, technically, accurate. It is just so far removed from the next sales call that it cannot change behavior. AI is the first technology cheap enough to compress that lag from months to days.

Why the loss-reason dropdown lies

Spend a week reading closed-lost transcripts in any B2B org and the same illusion shows up everywhere. The reasons reps log are not wrong, exactly. They are politically convenient summaries written under deadline pressure, by the person with the strongest incentive to present the loss as inevitable. "Budget" reads as not the rep's fault. "Timing" reads as not the rep's fault. "Went with a competitor" reads as a price problem rather than a positioning problem. None of these labels survive contact with the actual conversation.

Read the transcripts and a different story emerges. The "Budget" loss was usually a stakeholder problem — the rep never reached the person who could have signed the check. The "Timing" loss was usually a discovery problem — the cost of the status quo was never quantified, so waiting always looked cheaper than buying. The "Went with competitor" loss was usually a framing problem — the competitor wrote the requirements list, and the rep walked into a comparison they had already lost. The dropdown captures the symptom. The recording contains the diagnosis. Until very recently, nobody had time to read all the recordings.

- Loss reasons are picked at the moment of lowest information density: when the rep has already moved on emotionally.

- They are filtered through what the rep wants the manager to see, not what would help the next rep on the next call.

- No two reps map the same situation to the same dropdown value, so quarterly aggregates are noise.

- The single conversation that decided the deal usually happened weeks before the loss was logged — and was never reviewed.

- Manager loss reviews tend to anchor on the last call, where the deal was already gone, instead of the discovery call, where it could still have been saved.

Closed-won is the curriculum. Closed-lost is the test.

The mistake most teams make with AI in coaching is pointing it at losses first. Losses are diagnostically rich, but they are a noisy signal — a hundred things can go wrong in a deal, and the model has to figure out which one mattered. Wins are quieter and far more useful. They show, with low variance, what your team's repeatable winning behavior actually looks like: how deep discovery has to go, how many stakeholders have to be in the room, how fast next steps have to be confirmed, what objections get reframed instead of accepted.

A useful AI coach is calibrated on the curriculum first. It learns the shape of a winning deal in your business — not in B2B in general — and then grades every active opportunity against that shape. The result is not a "good rep / bad rep" score. It is a per-deal map of where this specific deal has drifted from the patterns that produce wins, and what one move would pull it back.
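One way to picture "grading a deal against the shape of a win" is a baseline built from closed-won deals and a per-deal list of gaps against it. The sketch below is illustrative only: the metric names, the sample numbers, and the use of a simple median are assumptions, not a description of any particular product.

```python
from statistics import median

# Hypothetical per-deal metrics; field names are illustrative, not a real CRM schema.
closed_won = [
    {"discovery_questions": 9, "stakeholders": 4, "days_to_next_step": 2},
    {"discovery_questions": 7, "stakeholders": 3, "days_to_next_step": 3},
    {"discovery_questions": 8, "stakeholders": 3, "days_to_next_step": 1},
]

# For each metric, whether a higher value looks more like a win.
HIGHER_IS_BETTER = {
    "discovery_questions": True,
    "stakeholders": True,
    "days_to_next_step": False,
}

def build_baseline(won_deals):
    """Median of each metric across the closed-won deals."""
    return {m: median(d[m] for d in won_deals) for m in HIGHER_IS_BETTER}

def drift_map(deal, baseline):
    """Return only the metrics where this deal falls short of the baseline."""
    gaps = {}
    for metric, higher_better in HIGHER_IS_BETTER.items():
        value, target = deal[metric], baseline[metric]
        short = value < target if higher_better else value > target
        if short:
            gaps[metric] = {"deal": value, "baseline": target}
    return gaps

baseline = build_baseline(closed_won)
active_deal = {"discovery_questions": 3, "stakeholders": 1, "days_to_next_step": 2}
gaps = drift_map(active_deal, baseline)
```

The output is a map of gaps for one deal, not a score for the rep, which is the distinction the paragraph above is drawing.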

Discovery depth, scored call by call

Did the rep ask about the cost of the status quo, in numbers? Were the questions about feature mechanics, or about decision mechanics — who signs, who blocks, what has to be true to move? Discovery quality has the highest correlation with eventual close rate of any single dimension we see, and it is the easiest one for AI to grade objectively. Most teams find that 30–40% of their first calls would be flagged as shallow against their own closed-won baseline. Those are the deals that quietly drift to closed-lost three months later under the label "Timing".

Two questions, same call, very different deal trajectories.

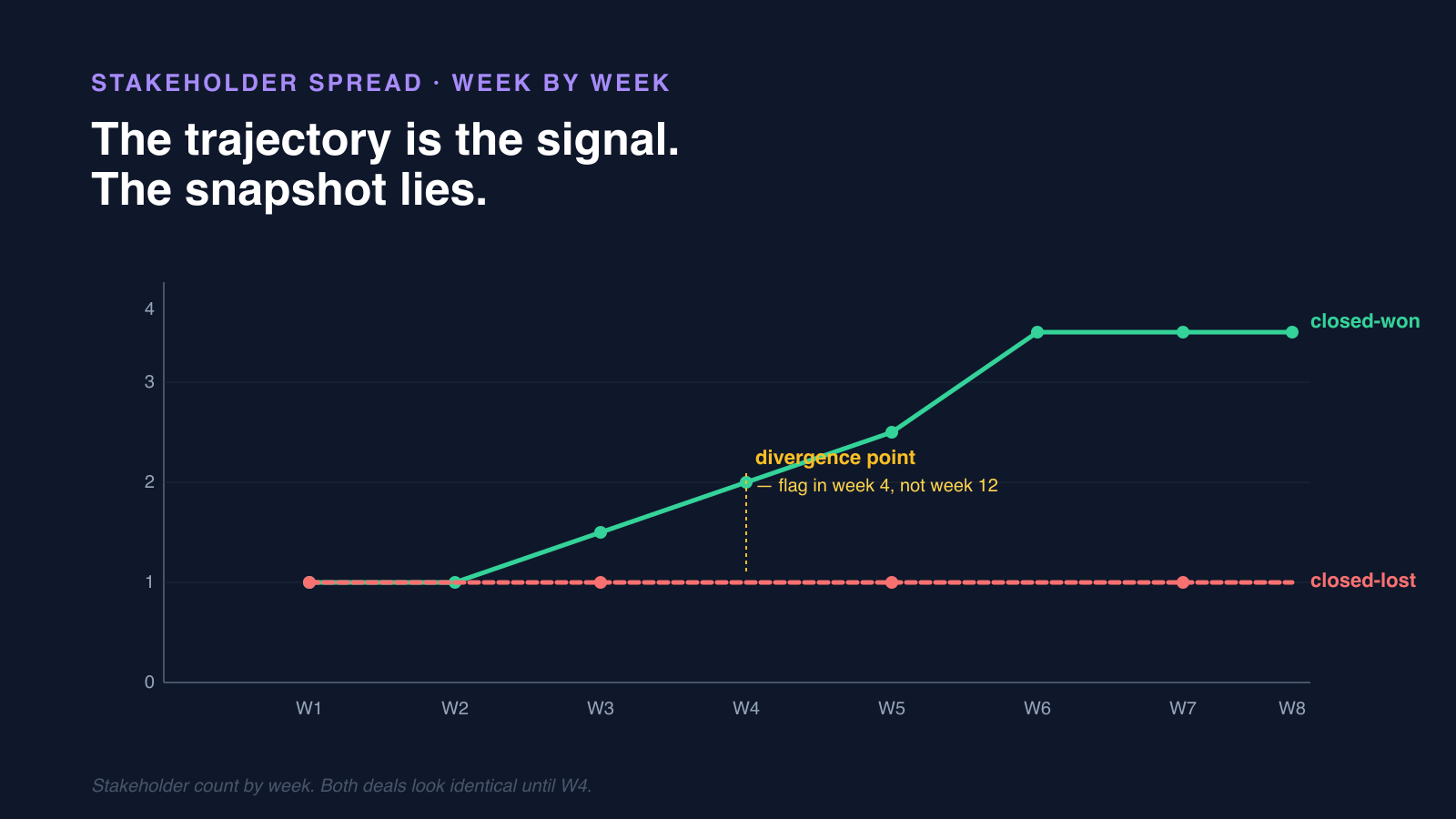
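As a rough sketch of what "feature mechanics versus decision mechanics" could look like in code, the example below tags each rep question by keyword and reports the share that touch decision mechanics. The cue phrases and the baseline value are assumptions for illustration; a real grader would more likely use a language model than a keyword list.

```python
# Hypothetical cue phrases for decision-mechanics questions (assumed, not exhaustive).
DECISION_CUES = (
    "who signs",
    "who approves",
    "who could block",
    "what has to be true",
    "cost you",
    "cost of the status quo",
)

def classify_question(question):
    """Label a question 'decision' if it contains any cue phrase, else 'other'."""
    text = question.lower()
    if any(cue in text for cue in DECISION_CUES):
        return "decision"
    return "other"

def discovery_depth(questions):
    """Share of the rep's questions that probe decision mechanics (0.0 to 1.0)."""
    if not questions:
        return 0.0
    decision = sum(classify_question(q) == "decision" for q in questions)
    return decision / len(questions)

def is_shallow(questions, won_baseline):
    """Flag a call whose depth falls below the team's closed-won baseline."""
    return discovery_depth(questions) < won_baseline

first_call = [
    "Does it integrate with Salesforce?",
    "Who signs off on a purchase like this?",
    "What does waiting another quarter cost you, in numbers?",
    "Can we export to CSV?",
]
depth = discovery_depth(first_call)
```

The baseline passed to `is_shallow` would come from the team's own closed-won first calls, in line with the calibration described earlier.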

Stakeholder spread, week by week

Closed-won deals in most B2B segments show three or more named stakeholders by week six. Closed-lost deals look the same in the opening weeks and then plateau on a single champion. The trajectory is the signal, not the snapshot. AI watching this curve in real time can flag the at-risk deal in week five, when there is still a play: a CFO intro, a security review request, a peer reference call. By the time a human notices, the champion has already gone quiet and the deal is unrecoverable.
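A minimal sketch of reading the trajectory instead of the snapshot: given a weekly count of named stakeholders, flag a deal that is below target and has stopped growing. The target of three comes from the paragraph above; the three-week window and the function name are assumptions.

```python
def is_plateaued(weekly_stakeholders, window=3, target=3):
    """Flag a deal whose named-stakeholder count is below `target`
    and has not grown over the last `window` weeks."""
    if len(weekly_stakeholders) < window:
        return False  # not enough history to judge a trend
    recent = weekly_stakeholders[-window:]
    no_growth = recent[-1] <= recent[0]
    return no_growth and recent[-1] < target

# Hypothetical weekly counts, one entry per week since the first call.
healthy = [1, 1, 2, 2, 3]
at_risk = [1, 1, 1, 1, 1]

# At week two the two deals are indistinguishable: neither is flagged.
same_early = is_plateaued(healthy[:2]) == is_plateaued(at_risk[:2])

# By week five only the single-champion deal is flagged.
flag_healthy = is_plateaued(healthy)
flag_at_risk = is_plateaued(at_risk)
```

Both deals show one stakeholder in week two, so a snapshot cannot separate them; only the curve does.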

The trajectory is the signal. The snapshot lies.

Objection responses, scored against winning patterns

When the buyer pushed back on price, integration scope, or implementation effort, did the rep reframe, capitulate, or go silent? Reps remember the objections they handled cleanly and forget the ones where they trailed off. The transcript does not. Closed-won data tells you which reframes work in your category — "this is a switching cost, not a pricing problem", "the integration we already did with X is the hard part" — and AI can flag, on the next live call, when one of those reframes was needed and missed.

Note: Reporting tells you what happened. Coaching tells the rep what to do on Tuesday.

A loss-reason dashboard is interesting. A coaching cue tied to the next scheduled call is operational. The first informs strategy quarterly. The second moves win rate weekly. Most teams have invested in the first and called it AI coaching.

Four rules for rolling AI coaching out without breaking trust

AI coaching has a short trust runway with reps. The moment it becomes a manager scorecard, reps stop calling — or worse, they start performing for the model, padding discovery questions to score well instead of to actually understand the buyer. The teams that make this stick treat the coach as a tool the rep owns, not a system the manager runs.

- Show the rep the cue before the manager sees it. Same data, sequenced differently. The rep gets to act on the cue before it shows up in a roll-up.

- Tie cues to the next call, not the last one. "Multi-threading is at one stakeholder — book a CFO intro this week" is operational. "Discovery was shallow on opp #4421" is a postmortem.

- Aggregate patterns at the team level, never the rep level, in cross-functional reviews. Individual transcripts stay in the rep-and-direct-manager loop. The moment marketing or finance can see a rep's coaching scores, the system is dead.

- Calibrate the model on closed-won first, so reps see the cue list as the recipe for repeatable wins, not a critique built from their losses.

What changes when the Coaching Lag closes

Teams that operationalize this see two distinct shifts. The first is mechanical: win rate moves first on the deals where the coach flagged a discovery or stakeholder gap in week three. Those gaps are cheap to fix early and impossible to fix late. Average sales cycle drops second, because reps stop running the same losing play through three more calls before admitting the deal is gone.

The second shift is structural and bigger. Loss-reason data finally becomes useful, because it is no longer a self-report. Instead of "Budget: 38%" — a number nobody can act on — the team gets "in 24 of these losses, the economic buyer was never in a call before week eight". That is a fixable problem with a named owner: SDR-to-AE handoff, multi-threading enablement, exec-sponsor program. The data points at a play.
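To make the contrast concrete, here is a small sketch that replaces the self-reported reason with an observed fact: whether the economic buyer joined any call before week eight. The record layout and field names are hypothetical, and the sample data is invented for illustration.

```python
from collections import Counter

# Hypothetical closed-lost records. `economic_buyer_first_call_week` is None
# when the economic buyer never joined a call.
losses = [
    {"opp": "A", "logged_reason": "Budget", "economic_buyer_first_call_week": None},
    {"opp": "B", "logged_reason": "Budget", "economic_buyer_first_call_week": 10},
    {"opp": "C", "logged_reason": "Timing", "economic_buyer_first_call_week": 3},
    {"opp": "D", "logged_reason": "Budget", "economic_buyer_first_call_week": 5},
]

def buyer_absent_before(loss, week=8):
    """True if the economic buyer was on no call before the given week."""
    first = loss["economic_buyer_first_call_week"]
    return first is None or first >= week

flagged = [loss for loss in losses if buyer_absent_before(loss)]

# How the flagged losses were labeled in the dropdown.
by_logged_reason = Counter(loss["logged_reason"] for loss in flagged)

summary = (
    f"in {len(flagged)} of {len(losses)} losses, "
    "the economic buyer was never in a call before week eight"
)
```

The dropdown label is kept only for comparison; the finding that points at a play is the behavioral one.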

And the deeper change shows up in the QBR itself. Loss reviews stop being a confession booth — the rep defending the dropdown they picked — and start being working sessions on a small set of recoverable, in-flight deals. The conversation moves from "why did we lose?" to "what specifically would we do differently on the next one, this week?" That is the conversation that compounds.

The deeper bet

Underneath the tooling, AI deal coaching is a bet on a different theory of how B2B teams improve. The old theory was that a few star reps developed instincts that could not really be transferred, and the rest of the team learned by osmosis over years. The new theory is that the instincts are real but the patterns are extractable — and once they are extracted, every rep can have access to them on every call. That is what closes the Coaching Lag. Not faster reports. A different operating model for what a sales organization actually is.

Turn every closed-lost into a coaching cue the rep can act on

Brixi reads conversation, engagement, and CRM data together so RevOps teams ship coaching the rep can act on this week, not a dashboard the rep ignores next quarter.
